Finding The Gradient Of A Function

listenit

Apr 03, 2025 · 6 min read

Finding the Gradient of a Function: A Comprehensive Guide

The gradient is a fundamental concept in vector calculus, with applications in machine learning, physics, and computer graphics. Knowing how to find the gradient of a function is essential for anyone working with multivariable calculus. This guide covers how to calculate the gradient, how to interpret it geometrically, and how it is applied, with worked examples along the way.

What is the Gradient?

The gradient of a differentiable scalar-valued function of several variables is a vector field: it assigns a vector to each point in the function's domain. Where that vector is nonzero, it points in the direction of the greatest rate of increase of the function at that point, and its magnitude is the rate of increase in that direction. In simpler terms, the gradient tells us which direction to move to increase the function's value most rapidly and how steep that increase is.

For a function of two variables, f(x, y), the gradient is denoted as ∇f(x, y) and is defined as:

∇f(x, y) = (∂f/∂x, ∂f/∂y)

where ∂f/∂x and ∂f/∂y represent the partial derivatives of f with respect to x and y, respectively. The partial derivative with respect to x measures the rate of change of f as x varies while holding y constant, and vice-versa for the partial derivative with respect to y.

Similarly, for a function of three variables, f(x, y, z), the gradient is:

∇f(x, y, z) = (∂f/∂x, ∂f/∂y, ∂f/∂z)

Calculating the Gradient: Step-by-Step Guide

Calculating the gradient involves finding the partial derivatives of the function with respect to each of its variables. Let's illustrate this with a few examples.

Example 1: A Simple Function of Two Variables

Let's find the gradient of the function f(x, y) = x² + y².

- Find the partial derivative with respect to x: ∂f/∂x = 2x

- Find the partial derivative with respect to y: ∂f/∂y = 2y

- Combine the partial derivatives to form the gradient: ∇f(x, y) = (2x, 2y)

Therefore, the gradient of f(x, y) = x² + y² is (2x, 2y).

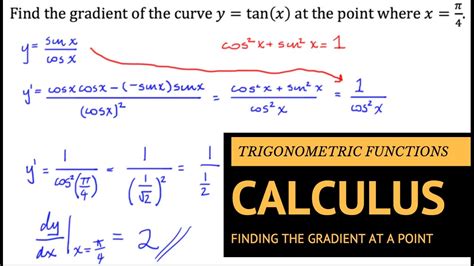

Example 2: A Function with Trigonometric Terms

Let's find the gradient of f(x, y) = sin(x)cos(y).

- Partial derivative with respect to x: ∂f/∂x = cos(x)cos(y)

- Partial derivative with respect to y: ∂f/∂y = -sin(x)sin(y)

- Gradient: ∇f(x, y) = (cos(x)cos(y), -sin(x)sin(y))

Example 3: A Function of Three Variables

Let's consider f(x, y, z) = x²y + yz² + xz.

- Partial derivative with respect to x: ∂f/∂x = 2xy + z

- Partial derivative with respect to y: ∂f/∂y = x² + z²

- Partial derivative with respect to z: ∂f/∂z = 2yz + x

- Gradient: ∇f(x, y, z) = (2xy + z, x² + z², 2yz + x)
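A hand-derived gradient can be spot-checked numerically. The sketch below compares the three gradients from Examples 1 to 3 against a central-difference approximation. The helper name `numerical_gradient`, the step size `h`, and the test points are illustrative choices, not part of the original examples.

```python
import math

def numerical_gradient(f, point, h=1e-6):
    """Approximate the gradient of f at point with central differences."""
    grad = []
    for i in range(len(point)):
        forward = list(point)
        backward = list(point)
        forward[i] += h
        backward[i] -= h
        grad.append((f(*forward) - f(*backward)) / (2 * h))
    return grad

# Each entry: (function, hand-derived gradient, arbitrary test point).
examples = [
    (lambda x, y: x**2 + y**2,
     lambda x, y: [2*x, 2*y],
     (1.0, 2.0)),
    (lambda x, y: math.sin(x) * math.cos(y),
     lambda x, y: [math.cos(x) * math.cos(y), -math.sin(x) * math.sin(y)],
     (0.5, 1.5)),
    (lambda x, y, z: x**2 * y + y * z**2 + x * z,
     lambda x, y, z: [2*x*y + z, x**2 + z**2, 2*y*z + x],
     (1.0, 2.0, 3.0)),
]

for f, grad_f, point in examples:
    analytic = grad_f(*point)
    numeric = numerical_gradient(f, point)
    print(point, analytic, [round(g, 6) for g in numeric])
```

If the two lists printed for a point disagree beyond rounding, the most likely culprit is a slip in one of the partial derivatives.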

Geometrical Interpretation of the Gradient

The gradient vector is perpendicular to the level curves (or level surfaces in three dimensions) of the function. A level curve is a curve along which the function has a constant value. Moving along a level curve leaves the function unchanged, so the direction in which the function increases fastest must be perpendicular to the curve. That direction of steepest ascent is the one the gradient points in.

Imagine walking on a mountain represented by a surface z = f(x, y). The gradient at your current location points in the direction of the steepest uphill climb. The magnitude of the gradient indicates the steepness of that climb.
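The perpendicularity claim can be checked on the function from Example 1, whose level curves are circles centered at the origin. The sketch below, with an arbitrarily chosen radius and sample angles, takes the dot product of the gradient with the tangent to the circle at several points.

```python
import math

def grad_f(x, y):
    """Gradient of f(x, y) = x^2 + y^2 (Example 1)."""
    return (2 * x, 2 * y)

# The level curve f = r^2 is a circle, parametrized as (r cos t, r sin t).
r = 2.0
dots = []
for t in (0.0, 0.7, 2.0, 4.5):
    x, y = r * math.cos(t), r * math.sin(t)
    tangent = (-r * math.sin(t), r * math.cos(t))  # derivative of the parametrization
    gx, gy = grad_f(x, y)
    dots.append(gx * tangent[0] + gy * tangent[1])

print(dots)  # every entry is zero up to rounding
```

A zero dot product means the gradient has no component along the level curve, which is what perpendicular means here.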

Applications of the Gradient

The gradient has numerous applications across various disciplines:

1. Optimization Problems:

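Finding the maximum or minimum of a function is a fundamental problem in optimization. The gradient is crucial here because at a local maximum or minimum in the interior of the domain of a differentiable function, the gradient is the zero vector (∇f = 0). The converse does not hold: the gradient also vanishes at saddle points, so points where ∇f = 0 are candidates that still need to be classified. This condition underlies many optimization algorithms, such as gradient descent.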

2. Machine Learning:

Gradient descent is a cornerstone of many machine learning algorithms. It involves iteratively updating the model parameters in the direction opposite to the gradient of the loss function to minimize the error. The gradient guides the algorithm towards the optimal parameters.
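As a minimal sketch of this idea, the code below fits a line y = w·x + b to a tiny data set by gradient descent on the mean squared error. The data, the learning rate, and the number of iterations are all assumptions chosen for illustration; real training loops usually rely on a library to compute gradients.

```python
# Hypothetical toy data generated from y = 2x + 1 (no noise).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

def loss_gradient(w, b):
    """Gradient of the mean squared error L(w, b) = mean((w*x + b - y)^2)."""
    n = len(xs)
    dw = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
    db = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
    return dw, db

w, b = 0.0, 0.0          # initial parameters
learning_rate = 0.05     # assumed step size
for _ in range(2000):
    dw, db = loss_gradient(w, b)
    w -= learning_rate * dw   # step against the gradient
    b -= learning_rate * db

print(round(w, 3), round(b, 3))  # close to the true slope 2 and intercept 1
```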

3. Image Processing:

Gradients are used extensively in image processing for edge detection. The magnitude of the gradient of an image's intensity function is high at edges, allowing for their identification and segmentation.
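The sketch below illustrates the idea on a made-up 5×6 grayscale image with a vertical edge in the middle. It approximates the intensity gradient with central differences at interior pixels. Practical pipelines typically use smoothed operators such as Sobel filters from an image library, so treat this as a bare-bones illustration only.

```python
import math

# Hypothetical image: dark region (0) on the left, bright region (9) on the right.
image = [
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
    [0, 0, 0, 9, 9, 9],
]

def gradient_magnitude(img):
    """Central-difference gradient magnitude at interior pixels; borders stay 0."""
    rows, cols = len(img), len(img[0])
    mag = [[0.0] * cols for _ in range(rows)]
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            gx = (img[r][c + 1] - img[r][c - 1]) / 2  # change along a row
            gy = (img[r + 1][c] - img[r - 1][c]) / 2  # change down a column
            mag[r][c] = math.hypot(gx, gy)
    return mag

magnitude = gradient_magnitude(image)
for row in magnitude:
    print(row)
```

The nonzero magnitudes appear only in the two columns that straddle the jump from 0 to 9, which is where the edge lies.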

4. Physics:

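In physics, the gradient relates scalar potentials to vector fields such as electric and gravitational fields. By convention, the field is the negative gradient of the corresponding scalar potential. For example, the electrostatic field is E = -∇V, so it points in the direction in which the potential decreases most rapidly.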
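As an illustration, take the potential of a point source at the origin, V = 1/r, with all physical constants set to 1 (an assumption made to keep the numbers simple). Using the usual sign convention E = -∇V, the field has the closed form r⃗/r³. The sketch below compares that closed form with a central-difference estimate at one arbitrary point.

```python
import math

def potential(x, y, z):
    """Potential of a point source at the origin, V = 1/r (constants set to 1)."""
    return 1.0 / math.sqrt(x * x + y * y + z * z)

def field_from_potential(V, point, h=1e-6):
    """Approximate E = -grad V at point with central differences."""
    field = []
    for i in range(3):
        forward, backward = list(point), list(point)
        forward[i] += h
        backward[i] -= h
        field.append(-(V(*forward) - V(*backward)) / (2 * h))
    return field

point = (1.0, 2.0, 2.0)                  # distance r = 3 from the origin
numeric = field_from_potential(potential, point)
expected = [c / 3.0**3 for c in point]   # closed form: position vector / r^3
print(numeric, expected)
```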

Advanced Concepts and Extensions

This section briefly touches upon some more advanced concepts related to the gradient:

1. Directional Derivative:

The directional derivative measures the rate of change of a function in a specific direction. It's given by the dot product of the gradient and a unit vector in the desired direction. This allows us to find the rate of change along any direction, not just the direction of steepest ascent.
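A short sketch of this formula, using the gradient from Example 1 at the point (1, 2). The function names and the chosen direction (3, 4) are illustrative; the helper normalizes the direction first so that any nonzero vector can be passed in.

```python
import math

def grad_f(x, y):
    """Gradient of f(x, y) = x^2 + y^2 (Example 1)."""
    return (2 * x, 2 * y)

def directional_derivative(grad, direction):
    """Dot product of the gradient with the normalized direction vector."""
    norm = math.hypot(*direction)
    unit = [d / norm for d in direction]
    return sum(g * u for g, u in zip(grad, unit))

g = grad_f(1.0, 2.0)                            # gradient at (1, 2)
along = directional_derivative(g, (3.0, 4.0))   # unit direction (0.6, 0.8)
steepest = directional_derivative(g, g)         # along the gradient itself
print(along, steepest, math.hypot(*g))
```

The value along the gradient's own direction equals the gradient's magnitude, and no other direction gives a larger value.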

2. Gradient Descent Algorithm:

The gradient descent algorithm uses the gradient to iteratively find a minimum of a function. The algorithm repeatedly updates the parameters by moving in the direction opposite to the gradient, with a step equal to the gradient scaled by a learning rate. Variations include batch gradient descent, stochastic gradient descent, and mini-batch gradient descent, which differ in how much of the data is used to compute each gradient and each have their own strengths and weaknesses.
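The basic update can be sketched in a few lines. The example below minimizes the function from Example 1, f(x, y) = x² + y², whose minimum is at the origin. The starting point, learning rate, and step count are arbitrary assumptions.

```python
def grad_f(x, y):
    """Gradient of f(x, y) = x^2 + y^2 (Example 1)."""
    return (2 * x, 2 * y)

def gradient_descent(grad, start, learning_rate=0.1, steps=50):
    """Repeat the update p <- p - learning_rate * grad(p)."""
    point = list(start)
    for _ in range(steps):
        g = grad(*point)
        point = [p - learning_rate * gi for p, gi in zip(point, g)]
    return point

minimum = gradient_descent(grad_f, start=(3.0, -4.0))
print(minimum)  # both coordinates shrink toward the minimizer (0, 0)
```

For this particular function each step multiplies both coordinates by (1 - 2 × learning rate), so a learning rate above 1 would make the iterates grow instead of shrink. This shows why the step size matters.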

3. Hessian Matrix:

The Hessian matrix is a square matrix of second-order partial derivatives of a function. It provides information about the curvature of the function's surface, which is crucial for determining the nature of critical points (maxima, minima, saddle points). Second-order optimization methods such as Newton's method also use the Hessian to choose the direction and length of each step.
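For a function of two variables, the classification of a critical point can be sketched with the standard second-derivative test, which looks at the determinant of the 2×2 Hessian. The function name and the three sample functions below are illustrative, and their second derivatives were computed by hand.

```python
def classify_critical_point(fxx, fxy, fyy):
    """Second-derivative test for a two-variable function at a critical point."""
    det = fxx * fyy - fxy ** 2   # determinant of the Hessian [[fxx, fxy], [fxy, fyy]]
    if det > 0:
        return "local minimum" if fxx > 0 else "local maximum"
    if det < 0:
        return "saddle point"
    return "inconclusive"

# Hessian entries at the origin, which is a critical point of each function:
print(classify_critical_point(2, 0, 2))    # f = x^2 + y^2
print(classify_critical_point(2, 0, -2))   # f = x^2 - y^2
print(classify_critical_point(-2, 0, -2))  # f = -x^2 - y^2
```

When the determinant is zero the test says nothing, and higher-order information is needed.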

4. Gradient Ascent:

Instead of finding minima, sometimes we want to find maxima. Gradient ascent works similarly to gradient descent but moves in the direction of the gradient to find the maximum of a function.

Conclusion

The gradient is a powerful mathematical tool with broad applicability. This guide covered its definition, calculation, geometrical interpretation, and main applications. From finding the steepest ascent on a mountain to optimizing machine learning models, the gradient is central to fields that rely on multivariable calculus and optimization, and it is the starting point for more advanced topics such as directional derivatives and the Hessian.
